Jumping on this thread...

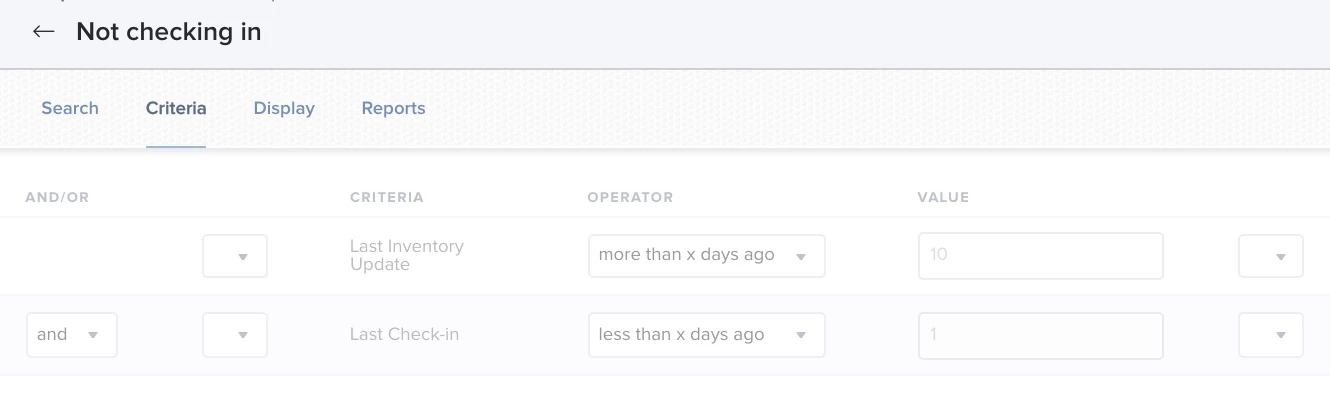

Random endpoints managed by our Jamf Pro instance have not checked in or submitted an inventory update for more than 10 days.
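To gauge how widespread this is, here is a minimal Python sketch that flags endpoints whose last check-in is older than 10 days. It assumes you already have each computer's name and last check-in time, for example from an Advanced Computer Search export. The `find_stale` helper and the record layout are hypothetical and not part of Jamf Pro.

```python
from datetime import datetime, timedelta


def find_stale(records, now, max_age_days=10):
    """Return sorted names of computers whose last check-in is older than max_age_days.

    records: iterable of (computer_name, last_checkin_datetime) tuples.
    This layout is a hypothetical assumption, e.g. rows parsed from an exported report.
    """
    cutoff = now - timedelta(days=max_age_days)
    # A computer is stale if its last check-in happened before the cutoff.
    return sorted(name for name, last_checkin in records if last_checkin < cutoff)


if __name__ == "__main__":
    now = datetime(2024, 5, 20)
    records = [
        ("mac-001", datetime(2024, 5, 19)),  # checked in yesterday
        ("mac-002", datetime(2024, 5, 1)),   # 19 days ago
        ("mac-003", datetime(2024, 5, 9)),   # 11 days ago
    ]
    print(find_stale(records, now))  # ['mac-002', 'mac-003']
```

Running this against a regular export can show whether the same machines keep going stale or whether it really is random.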

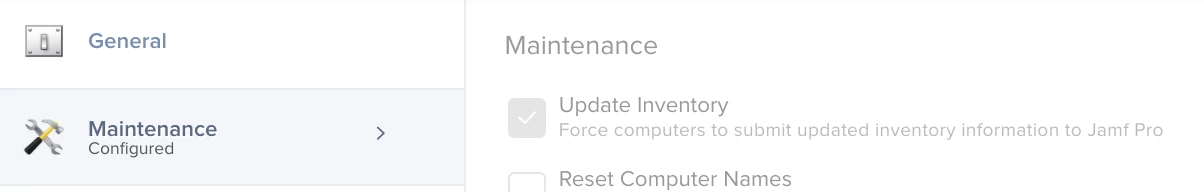

Our ad-hoc workaround is to ask the user to run a Self Service policy that forces an inventory update (see the attached screenshot: Policies -> Maintenance -> Update Inventory).

Does anyone know what causes this and how we can fix it for the long term?