Issues with MDM after 9.101 upgrade

Posted on 09-26-2017 08:15 AM

Hi everyone,

I'm having a strange issue with MDM commands in our production environment since upgrading from 9.99 to 9.101. I have a ticket in with support, but I thought I'd post this on the off chance that someone has seen a similar issue and has any suggestions.

I have a test environment and a production environment set up identically. The servers run RHEL, and each environment has an internal JSS plus a clustered external JSS used only for client communication. I upgraded our test environment to 9.101 a couple of weeks ago, and since everything worked fairly well, I upgraded our production environment last week.

Immediately after upgrading our production environment, I noticed that our MDM commands were extremely slow: 20+ minutes for a command to complete, if it completed at all. I tested communication with APNs, which seemed fine. Our test environment was still working, and moving devices from production to test resulted in MDM functioning normally.

On iOS devices, I noticed that if I ran a VPP app install from Self Service, it would immediately run any pending MDM commands. So, for example, if I initiated a lock device command for an iPad, it would be stuck on pending. But, if I then went into Self Service and installed any VPP app, the lock command would immediately kick in.

On macOS devices, I tried running various commands, including "jamf manage", but nothing would kick off the pending commands. The only thing that seems to work is rebooting the computer. The pending MDM commands run as soon as the reboot finishes and the network connection is re-established.

Everything else seems to be working normally. Devices check in, run policies, and submit inventory. It just seems like something went wrong during the upgrade.

If anyone has any suggestions, let me know.

Thanks!

Posted on 09-26-2017 08:17 AM

I saw a very similar problem recently. Are you running a cluster? Do you get MDM command slowness when running command(s) from a non-master JSS?

Edit: Since you do have a cluster, try temporarily setting your secondary JSS to full access, then send MDM commands from it. If that works, try bringing up a third JSS and using it as your master. You might even change DNS so that clients and end users aren't using the master JSS.

Posted on 09-26-2017 08:35 AM

Thanks for the information, @cbrewer. I enabled full access on the clustered JSS and, sure enough, MDM commands apply immediately when initiated from there. Any idea what causes this issue? Our test environment is configured the same way, so I was surprised when this happened in production.

Posted on 09-26-2017 08:55 AM

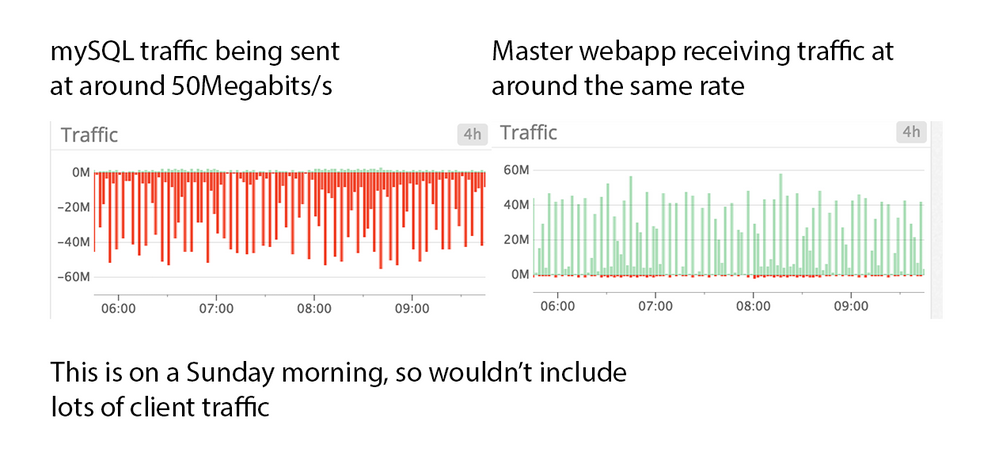

I currently have a case open with Jamf about this but haven't found a solution. In my case, my master JSS is almost constantly receiving massive amounts of data from the database server. Is your database server on a separate host? Is there an extremely high amount of traffic between the database and the master JSS?

Posted on 10-01-2017 09:52 AM

We were also having performance issues after 9.101.0, but I hadn't noticed the increased traffic between the MySQL server and the master web app. This is what I found from a 4-hour snapshot of my server dashboard this morning.

Posted on 10-11-2017 11:05 AM

We are seeing very similar issues with our cluster. For mobile devices, configuration profiles that we used successfully in 9.99 do not seem to be releasing the devices in 9.101. It is extremely difficult to get some devices to refresh their inventory without resetting their settings. We are also seeing a major problem with Lost Mode not releasing.

For our computers, setting up devices with a PreStage enrollment has become inconsistent at creating the admin account. They suggest removing this from my PreStage, but I use it regularly.

I have tried the suggestion above and will see what happens, but the configuration profile issue with the iPads has not been resolved. I'm going to open a ticket with Jamf about this today.